AI Cybersecurity Risks for Organizations: Insights from Chicago

What Organizations Are Asking About AI & Cybersecurity

Through ongoing discussions with organizations throughout the Chicago area, several consistent concerns are emerging around AI adoption and cybersecurity. In many cases, these concerns reflect broader industry trends. This page focuses on the AI cybersecurity risks organizations face and how leaders are responding in real-world environments.

Leaders in schools, park districts, libraries, and small to mid-sized businesses are asking more strategic questions, not just about technology, but about risk, governance, and long-term impact. As a result, understanding AI cybersecurity risks is becoming a priority across industries, and many leaders are exploring AI governance and cybersecurity strategy to better align innovation with risk management.

These conversations are no longer driven by technical teams alone. Executive leadership, administrators, and operations teams are increasingly involved in decisions about how AI tools are introduced and managed, bringing cross-functional perspectives to the table. This broader involvement can also help organizations reach decisions more quickly.

AI Adoption Is Outpacing Security Awareness

To better understand these concerns, it is important to examine how AI adoption is accelerating and where risks begin to emerge.

Why AI Adoption Is Accelerating

One of the most common patterns observed is that AI adoption is happening faster than internal policies can keep up. Because of this, many organizations are exposed to AI cybersecurity risks without fully realizing it.

For example, teams are beginning to use AI-powered business tools for productivity, communication, and data analysis. However, there is often limited visibility into how these tools handle sensitive business data and what cybersecurity risks they introduce. As usage expands, so does the risk: new tools are introduced faster than policies can be updated, so gaps appear before controls are defined.

How Security Gaps Are Emerging

At the same time, organizations are unintentionally introducing new attack surfaces, so leadership teams need to evaluate these risks more proactively. In many cases, this means aligning AI usage with broader cybersecurity consulting and risk management strategies. Doing so gives organizations clearer visibility into emerging threats and helps them prioritize mitigation efforts more effectively.

With that context in mind, it helps to break down the most common AI cybersecurity risks organizations are facing today.

Common AI Cybersecurity Risks Organizations Are Facing

Key Risk Categories Organizations Should Understand

Based on real-world discussions, several recurring concerns continue to surface. They highlight why proactive planning is essential and why organizations need stronger governance and AI data security risk management.

Data Exposure

Sensitive information may be unintentionally shared through AI prompts and outputs. Learn how organizations mitigate this through cybersecurity consulting in Chicago.

Lack of Governance

Many organizations do not yet have clear policies controlling how AI tools are used. Because of this, gaps in oversight and accountability continue to grow.

Unclear Data Handling

Organizations often lack visibility into how AI platforms store, process, or retain data. This lack of visibility adds to the data security risks of using AI.

AI-Enhanced Phishing

Cybercriminals are using AI to create more convincing phishing and social engineering attacks. As a result, threats are becoming harder to detect. Organizations often address this through phishing simulation training programs.

Shadow IT Expansion

Employees are adopting AI tools independently. In many cases, this leads to unmanaged technology usage and increased exposure. This is commonly addressed with managed IT services in Chicago that improve visibility and control.

These are not theoretical risks. Many organizations are already seeing early indicators of these challenges, and the same patterns are repeating across industries. They reflect real uncertainty and growing pressure on leadership teams, and in response, many organizations are beginning to prioritize awareness and governance.

Questions Leaders Are Asking Right Now

As these risks become more visible, leadership teams are beginning to ask more strategic questions.

In many organizations across Chicago, several key questions consistently arise. Many of these discussions begin with uncertainty around AI usage and risk. As awareness grows, leaders are beginning to focus more on governance and oversight.

“Do we know where our data is going when employees use AI tools?”

“What policies should we have in place before allowing AI usage?”

“How do we balance productivity gains with cybersecurity risks?”

“Are our current cybersecurity measures enough to handle AI-related threats?”

In response, organizations are shifting their focus toward governance, visibility, and long-term risk management, often supported by cybersecurity strategy consulting services. In addition, this shift is influencing how teams prioritize technology decisions.

Insights from the Field: What This Means for Organizations

These questions are leading to important realizations across industries. For example, many leaders are re-evaluating existing security frameworks.

Based on these discussions, several important takeaways have emerged regarding AI-related cybersecurity challenges. These insights often reflect patterns that hold across industries, with similar challenges appearing in very different sectors.

AI Adoption Is Already Happening

Organizations are already using AI tools—whether formally approved or not. Because of this, risk is already present in many environments.

Security Strategies Are Lagging Behind

Traditional cybersecurity frameworks may not fully address the evolving risks introduced by AI technologies. In response, organizations must begin adapting their strategies to reduce exposure.

Leadership Teams Want Clarity

Decision-makers are not necessarily looking for more tools. Instead, they are seeking better understanding, clearer policies, and structured guidance for managing AI cybersecurity risks.

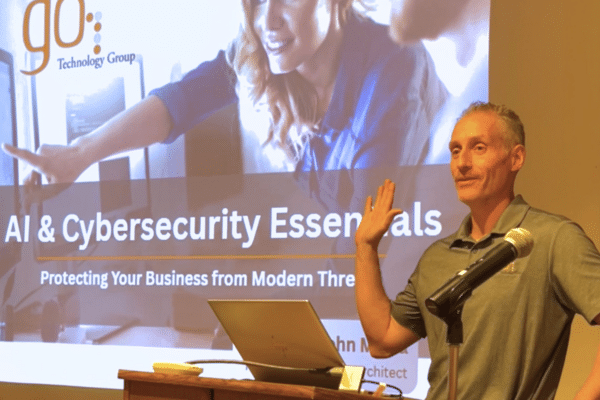

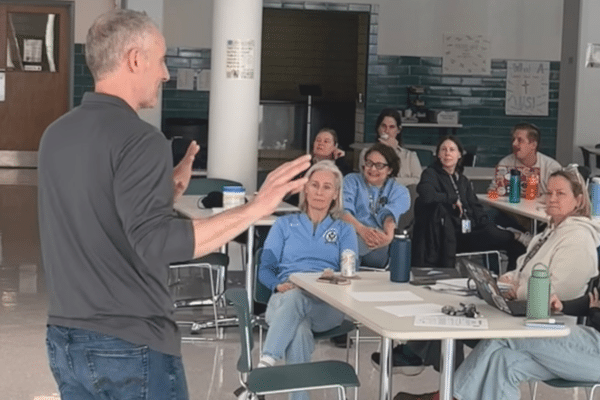

Where These Conversations Are Happening

These insights are drawn from presentations and discussions across a variety of community and professional environments. Because of this, they reflect real-world AI cybersecurity risks for organizations across multiple sectors.

Libraries

Community education environments exploring AI cybersecurity risks for organizations and public-facing technology use.

Schools

Education leaders evaluating AI cybersecurity risks in classrooms, including student data protection and device usage. Many institutions are also exploring student safety and device management solutions.

Park Districts

Public organizations assessing AI cybersecurity risks for operational systems and community services.

Businesses

Small to mid-sized organizations addressing AI cybersecurity risks in workflows, data security, and employee usage. These organizations often rely on IT consulting services in Chicago to guide implementation.

Each setting brings a different perspective, yet the underlying concerns remain remarkably consistent across sectors. Because common themes keep emerging, shared approaches can often be applied more broadly.

Related Insights and Resources

To explore specific examples and deeper discussions, review these resources that expand on AI cybersecurity risks for organizations across schools, communities, and businesses. In addition, these insights highlight how organizations are addressing AI security risks in real-world environments.

AI and Cybersecurity Education in Wilmette

Explore how community organizations are addressing AI cybersecurity risks for organizations through real-world education and public engagement.

AI and Cybersecurity Awareness in Addison

See how local organizations are engaging in conversations around AI cybersecurity risks and building awareness through community-focused presentations.

AI and Cybersecurity Awareness in Arlington Heights

Learn how organizations are approaching AI cybersecurity risks through real-world discussions, leadership questions, and practical insights.

AI Cybersecurity Risks in K-12 Schools

Understand how education leaders are managing AI cybersecurity risks in schools, including student data protection, device usage, and policy development.

AI Cybersecurity Challenges for Park Districts

Explore how park districts are navigating AI cybersecurity risks for public organizations, from operational systems to community-facing services.

Benefits of Cybersecurity Awareness Training

Discover how employee training helps reduce AI cybersecurity risks for organizations by improving awareness, behavior, and risk prevention.

These resources provide deeper insight into how organizations are identifying and managing AI cybersecurity risks in real-world scenarios, and they highlight practical approaches that can be adapted across different industries and environments.

How Organizations Are Moving Forward

Through these conversations, a clear shift is taking place. As a result, organizations are taking more structured steps to address AI cybersecurity risks.

Organizations are moving from reactive approaches to more proactive strategies, and these efforts are becoming more structured and intentional.

Additionally, many are exploring how broader strategies like managed IT services in Chicago, IT consulting in Chicago, and cybersecurity consulting services can support a more structured approach to risk management.

If your organization is evaluating how to manage AI-related cybersecurity risks, a structured evaluation can help clarify next steps.

Continuing the Conversation

If these questions are coming up within your organization, you are not alone. Many organizations are encountering similar challenges as AI adoption increases.

In many Chicago-based organizations, leadership teams are actively working to better understand AI cybersecurity risks and determine what steps to take next. Many are also reassessing how well their current security strategies align with these emerging risks.

As these conversations continue to evolve, new insights will emerge and organizations will keep refining their approach to AI cybersecurity risks. This page will continue to reflect what organizations are experiencing in real time.

If it would be helpful to continue the conversation, we are always open to connecting and sharing perspectives.

AI Cybersecurity Risks: Frequently Asked Questions

Common Questions About AI Cybersecurity Risks

What are the biggest AI cybersecurity risks for organizations?

The most common AI cybersecurity risks for organizations include data exposure through prompts and outputs, lack of governance over AI tool usage, unclear data handling practices, and increased sophistication in phishing and social engineering attacks. In addition, many organizations face challenges with shadow IT as employees adopt AI tools without formal oversight. Because of this, visibility and control become increasingly difficult to maintain.

How can organizations reduce AI cybersecurity risks?

Organizations can reduce AI cybersecurity risks by establishing clear usage policies, educating employees on responsible AI use, and incorporating AI considerations into their broader cybersecurity strategy. Because of this, leadership teams gain better visibility and control over how AI tools interact with sensitive data. In addition, ongoing training helps reinforce these practices over time.

Why is AI creating new cybersecurity challenges?

AI introduces new cybersecurity challenges because it changes how data is created, shared, and processed. For example, AI tools can unintentionally expose confidential information or be used to generate highly convincing phishing content. Traditional security frameworks may not fully address these evolving risks, so organizations must adapt their security strategies accordingly.

Do small and mid-sized organizations need to worry about AI security risks?

Yes, small and mid-sized organizations face many of the same AI cybersecurity risks as larger enterprises. In many cases, they may be more vulnerable due to limited internal resources or lack of formal governance structures. However, awareness and proactive planning can significantly reduce risk. Because of this, early action can make a meaningful difference.

What policies should organizations have in place for AI usage?

Organizations should consider policies that define acceptable AI use, data handling guidelines, approval processes for new tools, and employee training requirements. In addition, regular reviews of AI usage and risk exposure can help ensure policies remain effective over time. Furthermore, consistent enforcement is essential for long-term success.

How does AI impact existing cybersecurity strategies?

AI requires organizations to expand their cybersecurity strategies to include new forms of risk, such as data leakage through AI tools and AI-driven threat vectors. Because of this, cybersecurity planning must evolve to address both traditional threats and those introduced by AI technologies. In turn, this requires more proactive monitoring and policy alignment.

Are organizations in Chicago facing different AI cybersecurity risks?

While the core risks are consistent across regions, organizations across Chicago—including schools, libraries, and businesses—are actively navigating how to adopt AI responsibly within their specific operational environments. In response, local context often shapes how these risks are prioritized and addressed. At the same time, shared challenges continue to emerge across sectors.

PART OF THE ENDPOINT & THREAT DETECTION RESOURCE HUB

Endpoint & Threat Detection Strategies for Your Organization

Follow a structured approach to understand, evaluate, and implement proactive cybersecurity strategies that detect and contain threats before they disrupt operations.

Start with fundamentals, then evaluate your approach, apply protection strategies, and explore full solutions.

Understand the Fundamentals

Evaluate Your Endpoint Security Approach

Apply Proactive Cybersecurity Strategies

Explore Full Solutions

Designed to help organizations move from reactive IT to a proactive cybersecurity strategy.

